May 29, 2026

Opus 4.8 got released yesterday during our workshop. Very interested in the new “dynamic workflows” feature in Claude Code.

Quick thoughts, observations, and links — a microblog by Jeroen Gordijn

Today JD and I were at the Beyond Coding Podcast. Very nice setting and conversation. The episode will be online in a few weeks.

Interesting take by Jensen Huang: “if you have abundance of energy, it makes up for chips.” China has almost unlimited energy, so they don’t need the newest chips.

Discovered https://github.com/Infisical/agent-vault. I can now create a proxy in my Tailscale network and have all credentials for external services stored there. This way the AI agent never holds the credentials, so it can no longer leak them.
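
The idea behind such a proxy can be sketched roughly as follows. This is not agent-vault's actual API: `VAULT`, `forward_headers`, and the host name are hypothetical and only illustrate the principle that the agent sends a request without a secret and the proxy attaches the credential before forwarding it.

```python
# Hypothetical sketch of a credential-injecting proxy; NOT agent-vault's real API.

# Secrets live only on the proxy host; the agent never sees them.
VAULT = {
    "api.example.com": "Bearer secret-token-123",  # placeholder secret
}


def forward_headers(host: str, agent_headers: dict[str, str]) -> dict[str, str]:
    """Return the headers the proxy would send upstream for a request to `host`."""
    # Drop any Authorization header the agent set itself, so it cannot override the secret.
    headers = {k: v for k, v in agent_headers.items() if k.lower() != "authorization"}
    secret = VAULT.get(host)
    if secret is None:
        # Unknown hosts get no credentials at all.
        raise PermissionError(f"no credential configured for {host}")
    headers["Authorization"] = secret
    return headers


if __name__ == "__main__":
    print(forward_headers("api.example.com", {"Accept": "application/json"}))
```

Because the secret is only added on the proxy side, nothing in the agent's context or logs contains it.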

Seems like GitHub Copilot will move to token-based billing. This makes good sense as the request-based model is misaligned with reasoning selection and model changes, where smarter models use fewer tokens but are more expensive per token. Source: https://www.neowin.net/news/report-github-copilot-is-moving-to-token-based-billing-from-june/
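
A small worked example makes the misalignment concrete. All token counts and prices below are invented for illustration and are not real GitHub Copilot or model prices; `token_cost` is a hypothetical helper.

```python
# All numbers are made up for illustration; they are not real prices.

def token_cost(tokens_used: int, price_per_million: float) -> float:
    """Cost in dollars of one task under token-based billing."""
    return tokens_used / 1_000_000 * price_per_million


# A "smart" model: fewer tokens, higher price per token.
smart = token_cost(tokens_used=200_000, price_per_million=15.0)
# A "cheap" model: more tokens (retries, longer reasoning), lower price per token.
cheap = token_cost(tokens_used=900_000, price_per_million=3.0)

print(f"smart model: ${smart:.2f}")
print(f"cheap model: ${cheap:.2f}")
```

Under request-based billing each task is a single request, so the difference can only be approximated with a fixed per-model multiplier. Token-based billing charges what each task actually consumed.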

Playing around with my personal assistant via Hermes. Gave it GPT 5.5 and it is a great help. I named him Bender after the robot in Futurama.

GPT 5.5 got released yesterday and today we get DeepSeek v4. Opus 4.7 is getting bad press and the open models are getting way better.

Opus 4.7 seems much better at UI than GPT 5.4. I spent today trying multiple times with GPT to fix a UI bug, but it kept failing. After just one shot with Opus, it was fixed.

Kimi K2.6, Qwen 3.6 Max. Did the open source models already catch up with OpenAI and Anthropic?

Benchmarks are insane: Qwen 3.6 Max and Kimi K2.6

Opus 4.7 is released. I wonder if it will be my new daily, replacing GPT 5.4.

What? A 7.5x multiplier in GitHub? Check out the announcement.

Adding proof to GitHub PRs in the form of screenshots really helps in the review process—and it helps the robot prove its own work too.

Meta released Muse. Are they joining the AI race (again)?

OpenAI now also has a €100 subscription. It also gives access to the Pro model.

Anthropic Mythos is scarily powerful. It is not publicly released, but it shows what’s coming.

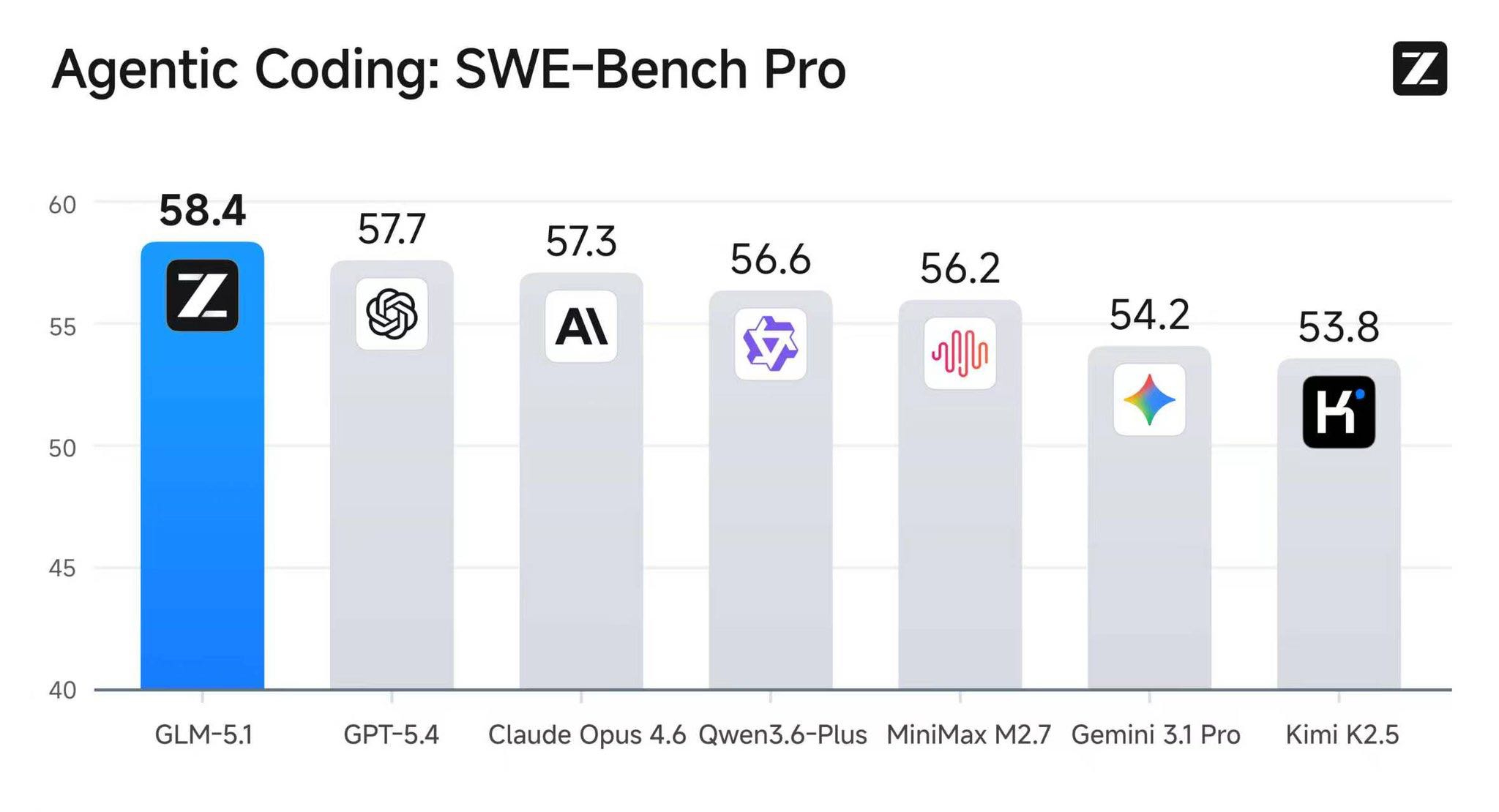

GLM 5.1 apparently outperforms Opus 4.6 on the SWE benchmarks.

Very interesting project: Glasswing

Using AI for the greater good, before handing it to the internet hackers and scammers.

Gas Town by Steve Yegge is now v1.0: https://steve-yegge.medium.com/gas-town-from-clown-show-to-v1-0-c239d9a407ec

GLM 5.1 pelican is pretty good: https://simonwillison.net/2026/Apr/7/glm-51/

With Mythos, a bug was found in OpenBSD that has existed for 27 years 😳

Tried out Qwen 3.6 Plus for a bit. I must say, I’m quite impressed. Fast and good.

It’s no longer allowed to use the Claude subscription with any harness other than Claude Code or their desktop. It still seems to work with Pi, but you risk having your account blocked.

The check to see if you’re using the correct harness with your Claude subscription seems to be: look at the system prompt and check if certain sentences appear. If so, block!

Another Chinese model - Qwen 3.6 Plus - that is performing quite well according to the benchmarks. Need to check for myself. — source

Claude Code source code got leaked by Anthropic. 500k lines of code.

Claude Mythos. Will we see a new model by Anthropic soon? Exclusive: Anthropic acknowledges testing new AI model representing ‘step change’ in capabilities, after accidental data leak reveals its existence

Running tools via bunx/npx instead of installing them via ‘bun install’. That way I always run the latest version.

ARC-AGI-3 is out. All models score <1%. I’m curious to see how this will hold up in 2026. https://arcprize.org/arc-agi/3

OpenCode is turning its features into plugins. This will seriously boost the plugin system. Will OpenCode become as customizable as pi? It allows users to build their own custom versions. I think this is where more and more software is headed.

Voxtral TTS by Mistral looks interesting. Need to look at it. Seems easy to clone your voice and use it in TTS.

Anthropic subscription no longer works in opencode.

Claude Code can now control your computer. https://x.com/claudeai/status/2036195789601374705?s=46

Interesting. Are we going to remove all skills? I guess just the generic skills. I think company specific skills or references will stay relevant.

New product by Google: Google Stitch. Design a website or app with AI. Good video by Fireship. Tried it out and it seems really impressive.

I’ve written a new blog post! Put some effort into Closing the Loop

Seems like an interesting project: plannotator — make notes and submit to your agent

Nice blog Grief and the AI Split. I see many people who are resisting AI, because they feel love and pride in writing code. I understand. It feels painful losing the thing you really love. I hope many will find new joy in using AI, because that is the way we are heading.

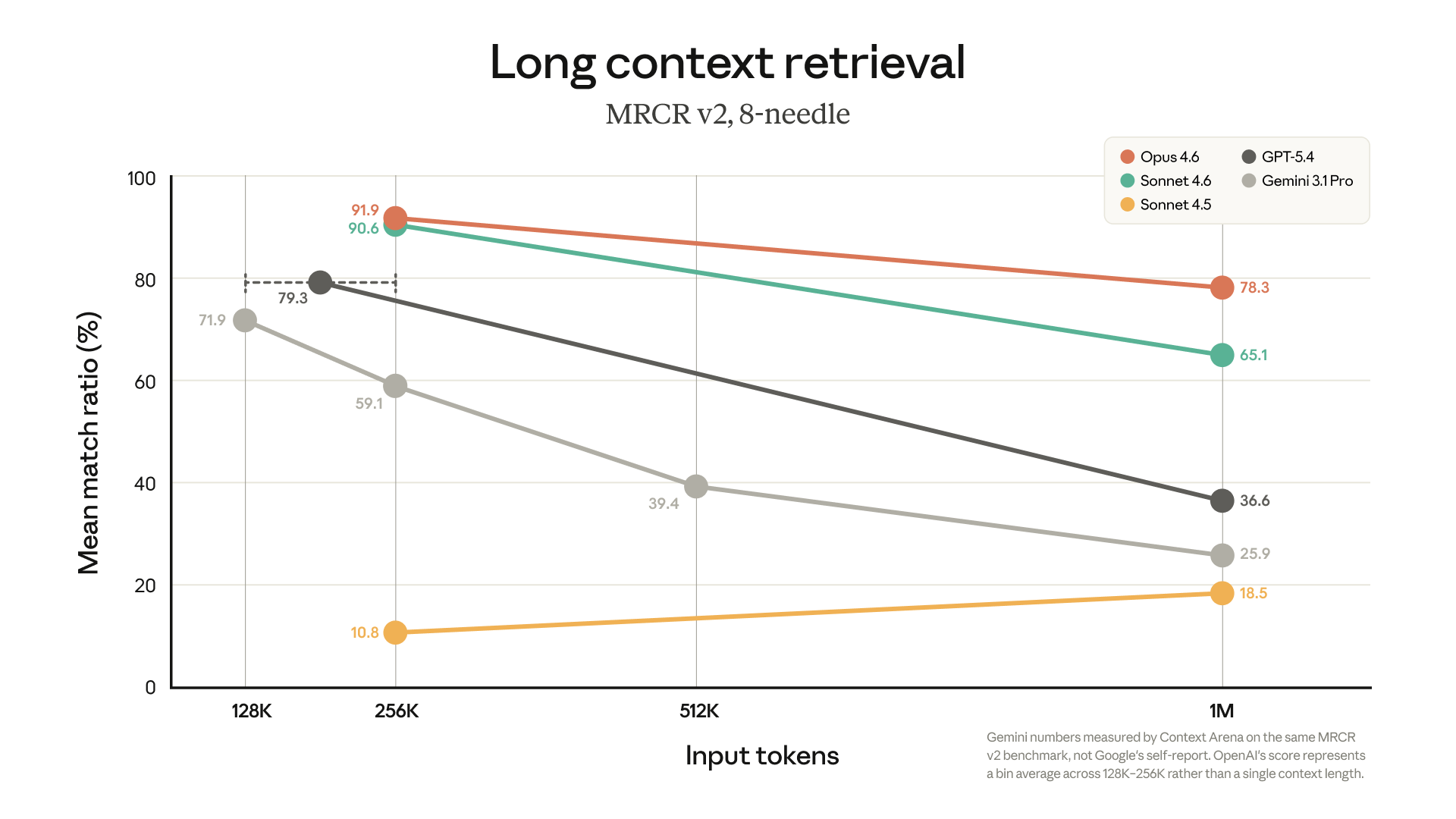

Anthropic just made the 1M context window the default. No more extra pricing.

Retrieval is also really good.

Blind taste test to research if people prefer AI written content, or human written content: https://www.nytimes.com/interactive/2026/03/09/business/ai-writing-quiz.html

After 86,000 responses, about 54% preferred the AI content: https://x.com/kevinroose/status/2031397522590282212?s=46

Very interesting quote by Yegge: Still using your IDE? You’re gonna get fired

Context engineering starts with the system prompt. How System Prompts Define Agent Behavior

Very interesting article and it shows again how important it is to work with the model, not the tool. We really should focus on tweaking how we address the model. Deliberate continuous practice.

Working with AI is more like learning to play the guitar than learning how a tool works.

Nice article to go with the previous one: Weird system prompt artefacts. The system prompts seem to be fighting against the default model’s behavior.

If you let the agent perform manual tests, can you still call it manual tests?

Codex, File My Taxes. Make No Mistakes. How AI outperformed the human accountant*.

Finally took the time to read Welcome to the Wasteland: A Thousand Gas Towns. Very interesting stuff. Earning credits based on actual performed work. I still don’t fully understand what the Wasteland is or how to apply it, so I’m adding it to my backlog to dig deeper.

Also, Gas Town is now ready for use. In these changing times, I think we need new ways of working, and the Wasteland and Gas Town seem to facilitate that.

The OpenAI API now supports WebSockets. Nice explanation by Theo - t3․gg on YouTube

This is a great improvement, and I’m looking forward to the other providers supporting this and seeing what it will do for my workflow. Apparently, a 20%-40% speed improvement.

GPT 5.4 dropped. This will be an insane year if this goes on.

Seems like Donald Knuth had his “oh-shit” moment:

Shock! Shock! I learned yesterday that an open problem I’d been working on for several weeks had just been solved by Claude Opus 4.6

https://www-cs-faculty.stanford.edu/~knuth/papers/claude-cycles.pdf

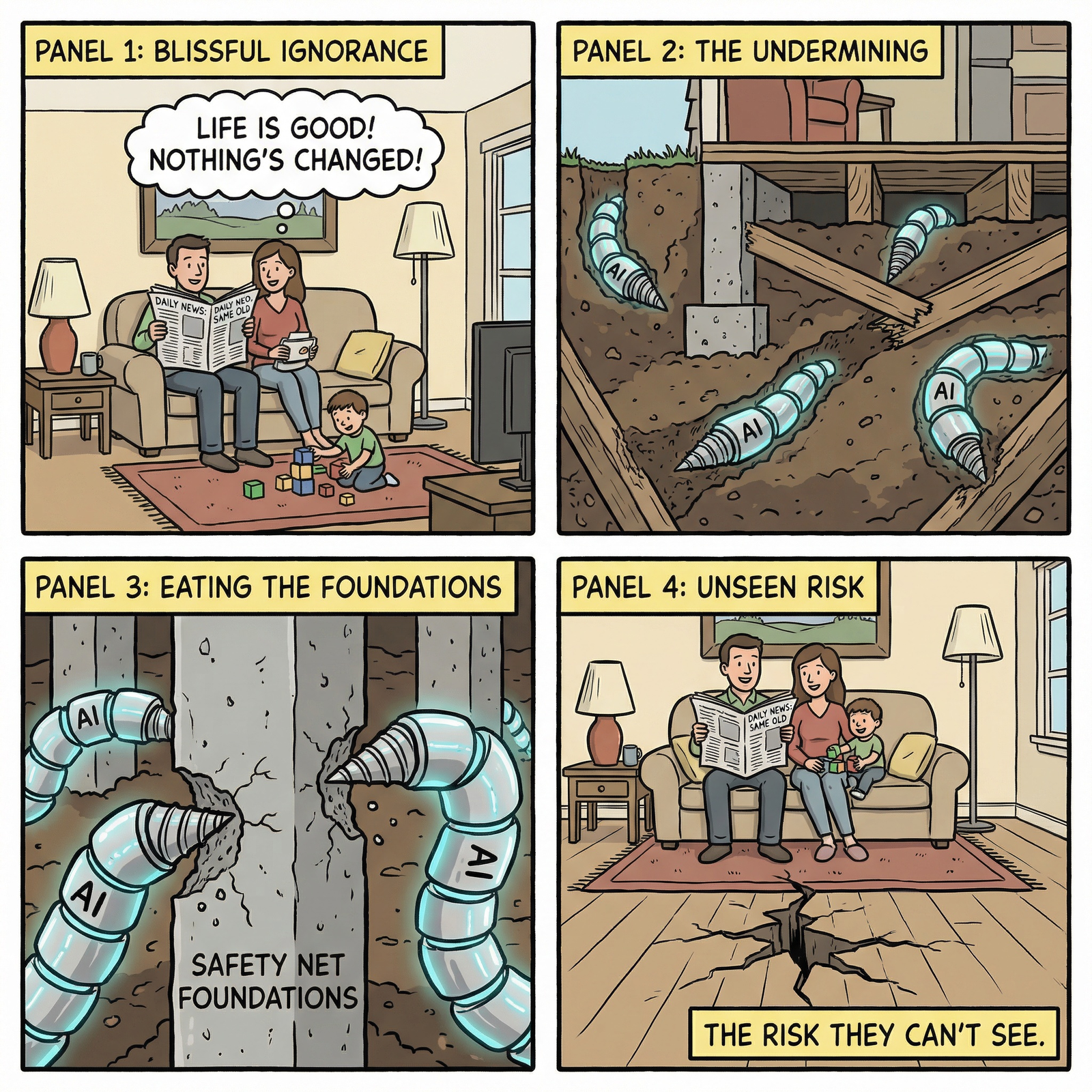

People calling AI “a tool” are completely missing what’s happening. The whole industry is shifting.

OpenCode is working on workspaces. Turn your agent into a control plane. Interesting.

NOS news item on AI in Software Development: “Nothing is happening, just another tool.”

Very interesting to hear Amodei explain their standpoint: Full interview: Anthropic CEO responds to Trump order, Pentagon clash

Love the new Obsidian Headless sync. Made my automation of auto-tagging notes much easier. I no longer need a VM with X on it.

Received a new KPN TV+ Box, but the video was stuttering. After a back-and-forth and debugging session with Gemini, I discovered that IPv6 needs to be enabled on my FritzBox. Googling this would have taken a long time. No support desk needed.

Brutal blog post by Geoffrey Huntley:

If your company has banned AI outright, you need to depart right now and find another employer.

The world is changing and people should no longer identify as programmers:

This is going to be a really hard time for a lot of people because identity functions have been erased, and the hard thing is, it’s not just software developers. It’s people managers as well. If your identity function is managing people, you need to make adjustments. You need to get back onto the tools ASAP.

This is a must read and wake-up call for anyone thinking nothing is happening.

People talk about liking one model or another, then say they’ve been using it for months. You should really try new models every time they come out and see if your verdict still holds.

The Department of Defense threatened to remove Anthropic from its systems and mark it as a “supply chain risk” if Anthropic does not let the above points go.

Regardless, these threats do not change our position: we cannot in good conscience accede to their request.

Linear walkthroughs are an interesting idea. Need to test that tomorrow on a service I’ve implemented.

Tried the Linear walkthroughs today. That is really cool! The only problem I had was missing syntax highlighting. Luckily, my clanker came up with a 2-step solution for that. Really nice to have a guide to read the code.

I’ve been using GPT-5.3-Codex throughout the day. The context window of 400k vs the 120k of Opus (in GH Copilot) makes a real difference. Getting great results, and I’m starting to like GPT-5.3-Codex more and more. Would be nice if Opus on GH Copilot got a bigger context window too. I guess the request model is holding GH back.

Extended my pi today. Added a widget that shows which skills got loaded, and updated the status line to show git status.

I don’t need to install pi (or any other agent) on my servers when it can just ssh into the machine and do its work 💡

After noticing how easily people can replicate software, tldraw is now removing the tests from the open-source repository.

Anthropic is making big moves in computer use. Last week Sonnet 4.6 was released with better computer use capabilities. Now Vercept got acquired.

Moving the tldraw tests to closed source was apparently a joke.

Every good model understands “red/green TDD” as a shorthand for the much longer “use test driven development, write the tests first, confirm that the tests fail before you implement the change that gets them to pass”.
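
The shorthand can be shown in miniature. This is only an illustrative sketch: `slugify`, `slugify_stub`, and `check_slugify` are hypothetical names, and a real project would use its normal test runner rather than bare assertions.

```python
# Red/green TDD in miniature. All names are hypothetical examples.

def check_slugify(slugify) -> bool:
    """The test is written first and describes the behaviour we want."""
    try:
        return slugify("Closing the Loop") == "closing-the-loop"
    except NotImplementedError:
        return False


def slugify_stub(title: str) -> str:
    """Step 1 (red): only a stub exists, so the test must fail."""
    raise NotImplementedError


def slugify(title: str) -> str:
    """Step 2 (green): the smallest change that makes the test pass."""
    return "-".join(title.lower().split())


assert check_slugify(slugify_stub) is False  # red: confirmed failing first
assert check_slugify(slugify) is True        # green: passes after the change
```

Confirming the red step matters: a test that passes before the implementation exists is not testing the change.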

IDEs are dead. Just thought of a new term. We will be working in an AME (Agent Management Environment).

15k tokens/sec is insane: https://chatjimmy.ai

Pi just added this:

• Added default skill auto-discovery for `.agents/skills` locations. Pi now discovers project skills from `.agents/skills` in `cwd` and ancestor directories (up to git repo root, or filesystem root when not in a repo), and global skills from `~/.agents/skills`, in addition to existing `.pi` skill paths.

Nice improvement. OpenCode supports it too. It would be nice if it didn’t stop at the Git repo root, but kept going up instead. That would allow for ~/projects/clientA/.agents and ~/projects/clientB/.agents — all projects for a specific client could inherit the correct skills.
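
The discovery rule quoted above can be sketched like this. It is a Python illustration of the described behaviour, not Pi's actual code; `discover_skill_dirs` is a hypothetical name, and the order of the returned list is an assumption.

```python
from pathlib import Path


def discover_skill_dirs(cwd: Path, home: Path) -> list[Path]:
    """Collect `.agents/skills` directories from cwd upward, then the global one."""
    found: list[Path] = []
    current = cwd.resolve()
    while True:
        candidate = current / ".agents" / "skills"
        if candidate.is_dir():
            found.append(candidate)
        # Stop at the git repo root, or at the filesystem root when not in a repo.
        if (current / ".git").exists() or current.parent == current:
            break
        current = current.parent
    # Global skills from the home directory come last.
    global_dir = (home / ".agents" / "skills").resolve()
    if global_dir.is_dir() and global_dir not in found:
        found.append(global_dir)
    return found


if __name__ == "__main__":
    for path in discover_skill_dirs(Path.cwd(), Path.home()):
        print(path)
```

In this sketch, the per-client setup wished for above would amount to dropping the `.git` check, so the walk always continues to the filesystem root.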

I just made a release of agentdeps with .agents support for pi and opencode: jgordijn/agentdeps:0.5.0

I wonder why people who are pro-AI feel the need to tone it down by saying that AI still makes errors. You still need to check. AI cannot do this or that. It’s a user problem, not an AI problem. As JD says: PICNIM (Problem In Chair, Not In Model)

I can relate to this post by Simon Willison. I sometimes find myself searching for something I think I made. Did I make it? Where did I do it?

MCPs are a way to restrict what an Agent can do. Not to give it more power: MCP to restrict agents

And another model is released. Gemini 3.1 Pro. And Simon made another nice writeup. I wonder what the rest of 2026 will bring.

Found on JD: The Coding Agent Is Dead. Amp may be ahead of the curve, but if you’re still working in the IDE, you’d better pay attention. You’re doing it wrong. The CLI is also not it, but it is for now. Amp is going to self-destruct the VS Code extension. How cool!

They also mention this:

Think of it as a ladder: we use it to climb up to the next level and then we might not need it.

That’s why I made The AI Coding Ladder

Nice quote from the same article:

In a system where agent throughput far exceeds human attention, corrections are cheap, and waiting is expensive.

A Guide to Which AI to Use in the Agentic Era by Ethan Mollick. Using AI is no longer about talking back-and-forth with the chatbot, but assigning tasks to your agent.

The shift from chatbot to agent is the most important change in how people use AI since ChatGPT launched. It is still early, and these tools are still hard to figure out and will still do baffling things. But an AI that does things is fundamentally more useful than an AI that says things, and learning to use it that way is worth your time. Ethan Mollick

Building my own RSS reader with AI summary and filtering. Using Minimax via OpenRouter is a massive cost reduction compared to using Anthropic Sonnet.

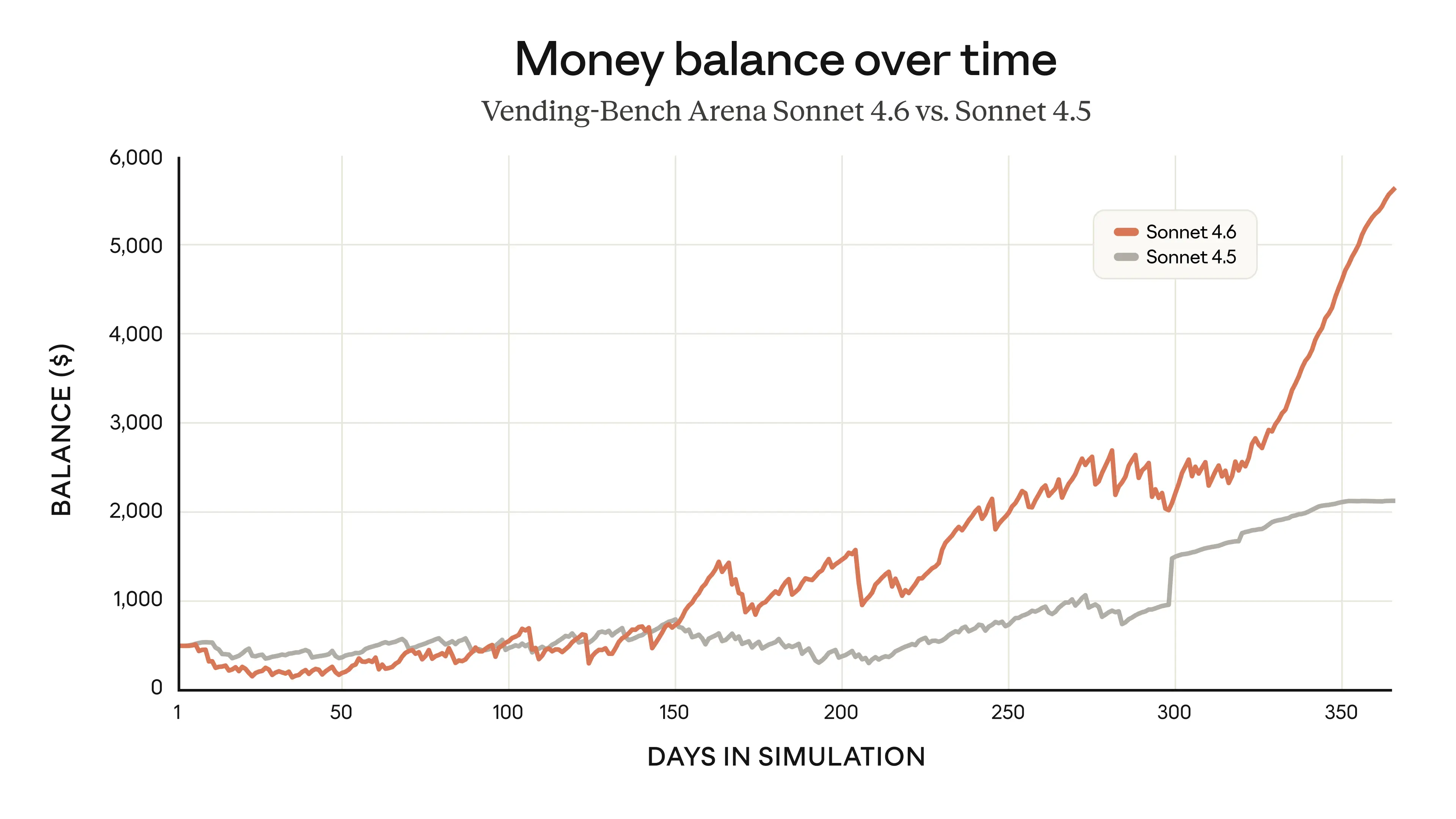

Sonnet 4.6 dropped. Nearing Opus 4.6 for lower cost. Better than Sonnet 4.5 and Opus 4.5. Most information is about computer use, so apparently it’s very good at that.

Just saw this in JD’s moments:

Agents optimize for outcomes, not attention.

Via https://x.com/garrytan/status/2023773343124537411?s=46

We need to build good services to win. Not the most popular. 🤔 Interesting thought!

One of the earliest lessons we learned was simple: give Codex a map, not a 1,000-page instruction manual.

OpenAI Harness engineering. This map/index keeps coming back. We need to provide the robot with indexes so it can discover the information it needs. JD is on the right track with his markdown references approach.

So instead of treating `AGENTS.md` as the encyclopedia, we treat it as the table of contents.

Peter Steinberger (creator of OpenClaw) is joining OpenAI to work on personal agents. OpenAI will apparently support OpenClaw. Sam Altman on X

Spotify says its best developers haven’t written a line of code since December, thanks to AI

The question isn’t whether AI matches the very best engineer you know. It’s whether it’s better than the average engineer.

Jarred Sumner asking for issues to fix on X:

if you have a bun GitHub issue open for awhile with a clear reproduction, reply with a link below & I will have Claude try to fix it today

And a whole bunch of issues got fixed.

And for AI to have a dramatic, nothing-will-be-the-same impact on software as an industry, it doesn’t need to be better than the best engineer you know. It only needs to be better than the average.

https://registerspill.thorstenball.com/p/joy-and-curiosity-74

OpenAI introduced GPT-5.3-Codex Spark which can run at 1000 tokens/sec. But this has little value if your tech stack is slow in compiling and testing. Working with Kotlin and Spring with Maven, this is becoming a real pain.

I’m the bottleneck now: https://x.com/thorstenball/status/2022310010391302259?s=46

For the first time, I can see myself actually doing some work on an iPad. Echo SSH is really nice. SSH into the dev server and start pi inside tmux and you’re off.

Pi has /export. It creates a nice export of the session to an HTML file.

You build it, you own it. The cost of ownership in The vibe coding trap. Can’t the issue mentioned be mostly mitigated by a bot that inspects the internet for regulation changes and other shifts that impact your code? Of course, a fool with a tool is still a fool. Just blindly copying with AI will lead to issues.

The open-weight models are getting really good. All Chinese: Kimi K2.5, GLM 5, MiniMax. How long until we can really run these open-weight models ourselves? It performed really well on the vending machine simulation, with a different strategy than other models: investing more in the beginning, then pivoting and becoming really profitable.

Steve Yegge leads by example on keeping a healthier balance: https://steve-yegge.medium.com/the-ai-vampire-eda6e4f07163

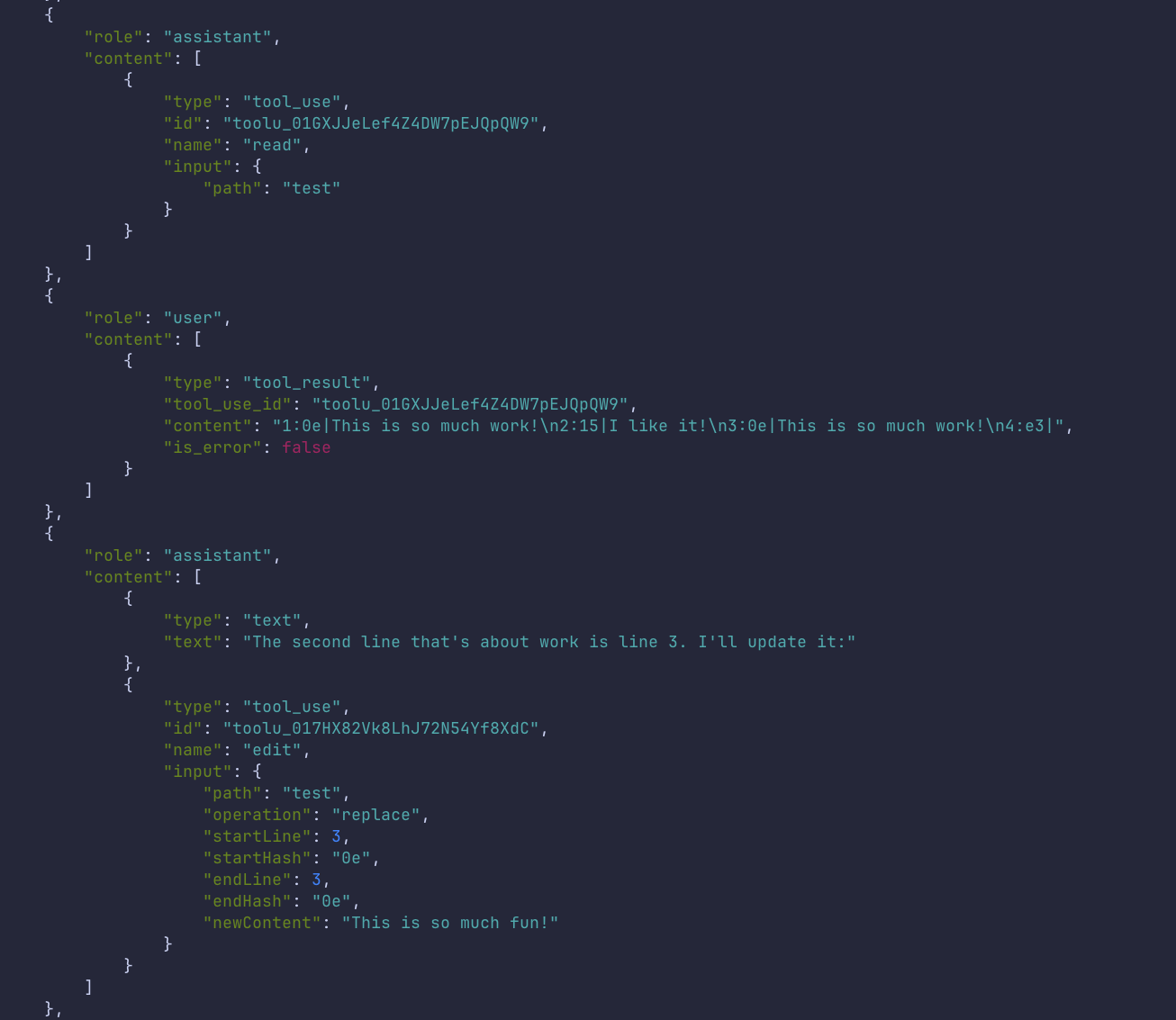

After reading this article: https://blog.can.ac/2026/02/12/the-harness-problem/ (see earlier moment) and seeing JD’s conversation with Mario Zechner on X (https://x.com/jeroendee/status/2022099377674911838?s=20), I fixed my clanker:

Added an events page to the website: https://inspired-it.nl/events/

JD pointed me to deepwiki.com. Really awesome. Here’s the documentation for agentdeps: https://deepwiki.com/jgordijn/agentdeps

This is actually a really good find! The edit tool is quite “simple” in how it searches and replaces, and apparently we can make it much better.

More accurate, fewer tokens: https://blog.can.ac/2026/02/12/the-harness-problem/

If you look at the security measures in other coding agents, they’re mostly security theater. As soon as your agent can write code and run code, it’s pretty much game over.

https://mariozechner.at/posts/2025-11-30-pi-coding-agent/

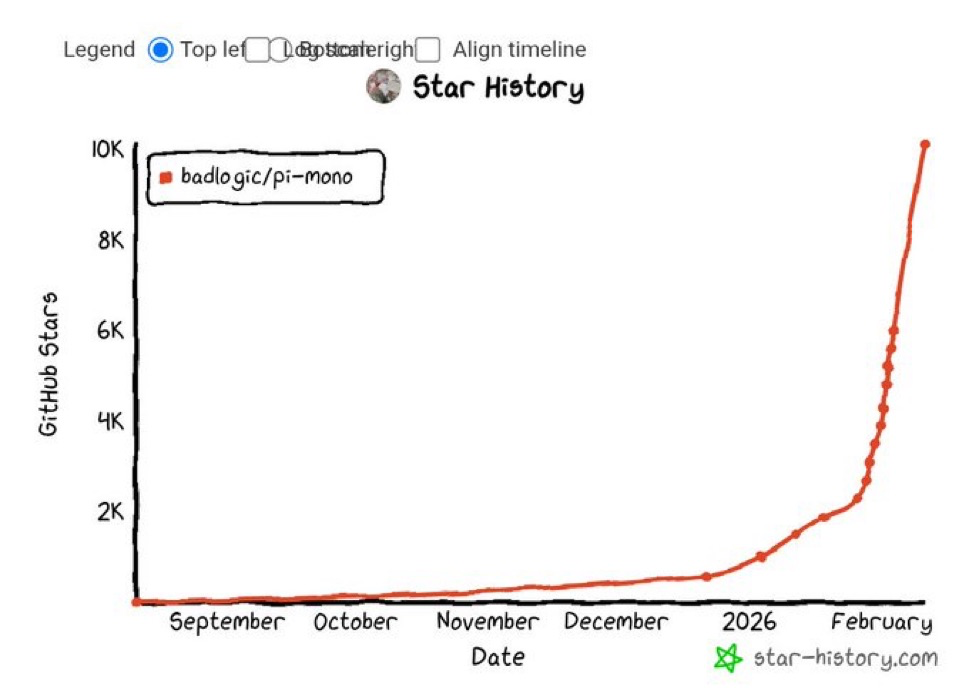

The rate at which new projects get popular is amazing.

Quite a wake up call:

I am no longer needed for the actual technical work of my job.

After a remark about missing dark mode I gave the following prompt to Claude mobile app, in the code tab:

The website has no dark mode. I need the site to have a dark mode. It should listen to system preference by default, but it should also be possible to toggle manually. The toggle should be easy to see but not too much in your face. Make a PR for this.

Now the site has dark mode!

You build code with AI and you have a lot of information in the context about how and why it is built this way. But after the session we throw this away. No more! Commit the session together with the code. New project by former GitHub CEO Thomas Dohmke, funded with $60 million in the seed round.

Pi is such a cool project. It makes me think in whole other ways about working with LLMs. No more magic, all logic is put in place by me. I still like OpenCode as well. But using Pi I now better understand what’s happening.

AI may make you more productive, but working more and more… I’m not alone: https://x.com/simonw/status/2020901645597683870?s=46

This shows the power of Pi, if you can imagine it, you can build it: https://github.com/nicobailon/pi-prompt-template-model

Building a little bot for Slack with AI using Claude Opus 4.6. Without asking, it built the integration on OpenAI’s API. Why didn’t it favor the Claude SDK? Now that I’ve switched to OpenRouter, it becomes apparent that OpenRouter and Ollama both use the OpenAI API, so I think the OpenAI API is just more common.
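
A minimal sketch of what that shared API shape means in practice: the same chat-completions request can target different providers by swapping only the base URL. `build_chat_request` is a hypothetical helper, the model names in the usage below are placeholders, and the base URLs are the commonly documented ones, so check each provider's documentation before relying on them. Nothing is actually sent.

```python
import json

# Base URLs of OpenAI-compatible endpoints (verify against each provider's docs).
BASE_URLS = {
    "openai": "https://api.openai.com/v1",
    "openrouter": "https://openrouter.ai/api/v1",
    "ollama": "http://localhost:11434/v1",  # default local Ollama port
}


def build_chat_request(provider: str, model: str, prompt: str, api_key: str):
    """Build (url, headers, body) for a chat completion; no network call is made."""
    url = f"{BASE_URLS[provider]}/chat/completions"
    headers = {
        # A local Ollama typically ignores the key, but the header shape is the same.
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    })
    return url, headers, body


if __name__ == "__main__":
    for provider in BASE_URLS:
        url, _, _ = build_chat_request(provider, "placeholder-model", "hello", "KEY")
        print(provider, "->", url)
```

Only the base URL and the model name differ between providers, which may be why a model reaches for this API by default.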

Just set up a Slack bot in about 1h to add messages to my moments (https://github.com/jgordijn/moments-slackbot). This message was created via Slack. The power we get! An idea is now realized in no time 💪

In rule form:
• Code must not be written by humans
• Code must not be reviewed by humans

Finally, in practical form:
• If you haven’t spent at least $1,000 on tokens today per human engineer, your software factory has room for improvement

Nice. No more coding by hand. No more reviewing. Tokens as a measure of effectiveness 😎

Tests can be reward hacked - we needed validation that was less vulnerable to the model cheating

Building a digital Twin of services to allow for massive integration testing. Create tests, not accessible by the agents. Instead of a Boolean test, write a probabilistic and empirical one. 🤯
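
One way to read "probabilistic and empirical" is to run a scenario many times and assert on the pass rate instead of on a single true/false result. The sketch below is an assumption about what that could look like, not the approach from the source: `flaky_scenario` stands in for a real run against the digital twin, and the 95% success rate and 0.9 threshold are invented.

```python
import random


def flaky_scenario(rng: random.Random) -> bool:
    """Hypothetical stand-in for one end-to-end run against the digital twin."""
    return rng.random() < 0.95  # pretend the system succeeds about 95% of the time


def satisfaction(scenario, runs: int, seed: int = 0) -> float:
    """Fraction of runs in which the scenario succeeded."""
    rng = random.Random(seed)  # seeded so the measurement is reproducible
    passed = sum(scenario(rng) for _ in range(runs))
    return passed / runs


score = satisfaction(flaky_scenario, runs=1000)
# Instead of "did it pass?", the check becomes "does it pass often enough?".
assert score >= 0.9, f"satisfaction too low: {score:.3f}"
```

The threshold becomes a quality bar that can be tightened over time, and a single lucky or unlucky run no longer decides the outcome.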

Source: https://factory.strongdm.ai

Going to experiment with adding a microblog to the site. The idea is simple: quick notes and observations that don’t need the full treatment of a blog post. Sometimes you just want to jot down a thought. Inspired by the “Moments” section on aishepherd.nl by Jeroen Dee.

How to secure your agent? You can’t. You can try to add some instructions, but if it has bash access, it can do just about everything.

A huge part of working with agents is discovering their limits. The limits keep moving right now, which means constant re-learning. But if you try some penny-saving cheap model like Sonnet, or a second rate local model, you do worse than waste your time, you learn the wrong lessons.

Nice hot take. Use the best models before complaining LLMs don’t work.